
Decision tree entropy

9/7/2023

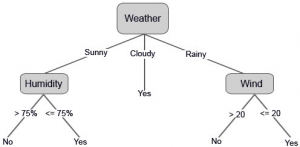

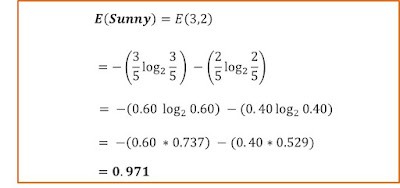

A decision tree breaks a dataset down into smaller and smaller subsets as the tree is incrementally developed. The final result is a tree with decision nodes and leaf nodes. For an example, we will use the same labeled data set as in the naive Bayes classification. In summary, entropy is a measure of the uncertainty or randomness in a set of data, and it is used in decision tree algorithms to determine the best feature to split the data on at each node: the feature with the highest information gain (i.e., the greatest reduction in entropy) is chosen as the splitting criterion.
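To make the selection rule concrete, here is a small worked comparison. The numbers are made up for illustration (they are not the naive Bayes data set mentioned above), and the symbols $H$ for entropy in bits and $IG$ for information gain are notation assumed here. Suppose a set $S$ holds 5 positive and 5 negative examples, feature $A$ splits it into subsets with class counts (4, 1) and (1, 4), and feature $B$ splits it into subsets with class counts (3, 2) and (2, 3).

$$
\begin{aligned}
H(S) &= -0.5 \log_2 0.5 - 0.5 \log_2 0.5 = 1 \\
H(S \mid A) &= \tfrac{5}{10}\,H(0.8,\,0.2) + \tfrac{5}{10}\,H(0.2,\,0.8) \approx 0.722 \\
H(S \mid B) &= \tfrac{5}{10}\,H(0.6,\,0.4) + \tfrac{5}{10}\,H(0.4,\,0.6) \approx 0.971 \\
IG(S, A) &= H(S) - H(S \mid A) \approx 0.278 \\
IG(S, B) &= H(S) - H(S \mid B) \approx 0.029
\end{aligned}
$$

Under these assumed counts, feature $A$ has the higher information gain, so it would be chosen as the splitting criterion at this node.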

Entropy is a measure of the impurity or randomness of a set of data. One important aspect of decision tree algorithms is the calculation of entropy, which is used to determine the best feature to split the data on at each node of the tree. In the context of decision trees, entropy quantifies the amount of uncertainty or disorder in the target variable (i.e., the variable we are trying to predict).

Decision Trees (DTs) are a non-parametric supervised learning method used for classification and regression. A decision tree builds classification or regression models in the form of a tree structure. The goal is to create a model that predicts the target variable.

The entropy of a set S with respect to a binary classification problem (i.e., two possible outcomes) is defined as:

$$H(S) = -p_1 \log_2 p_1 - p_2 \log_2 p_2$$

where $p_1$ and $p_2$ are the proportions of positive and negative examples in S, respectively, and a term with a proportion of 0 is taken to be 0. The logarithm base 2 is used to measure entropy in bits. The entropy of a set S is 0 if all examples in S belong to the same class (i.e., there is no uncertainty), and it is 1 if the examples are evenly split between the two classes (i.e., maximum uncertainty).

The aim of the decision tree in this type of problem is to reduce the entropy of the target variable. Therefore, the goal of the decision tree algorithm is to find the feature that minimizes the entropy of the resulting subsets after the split. For this, the decision tree algorithm uses the information gain.

To calculate the entropy of a split on a feature A with possible values $a_1, \dots, a_n$, we first compute the proportion $p_i$ of examples in S that have value $a_i$ for feature A (these are proportions of feature values, not the class proportions used above). We then calculate the entropy of the resulting subsets as:

$$H(S \mid A) = \sum_{i=1}^{n} p_i \, H(S_{a_i})$$

where $S_{a_i}$ is the subset of examples in S that have value $a_i$ for feature A.

The information gain of the split on feature A is then defined as the reduction in entropy:

$$IG(S, A) = H(S) - H(S \mid A)$$

Related video: "How to find the Entropy and Information Gain in Decision Tree Learning" by Mahesh Huddar.
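The entropy and information gain definitions above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names `entropy` and `information_gain`, and the toy labels and feature values, are assumptions made up for this example.

```python
import math
from collections import Counter


def entropy(labels):
    """Entropy, in bits, of a list of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    # Classes with a count of zero never appear in the Counter,
    # so log2(0) is never evaluated.
    return sum(-(c / total) * math.log2(c / total)
               for c in Counter(labels).values())


def information_gain(feature_values, labels):
    """Entropy of `labels` minus the weighted entropy after splitting
    them by the corresponding entries of `feature_values`."""
    total = len(labels)
    subsets = {}
    for value, label in zip(feature_values, labels):
        subsets.setdefault(value, []).append(label)
    # Each subset is weighted by the proportion of examples it holds.
    weighted = sum(len(s) / total * entropy(s) for s in subsets.values())
    return entropy(labels) - weighted


# Hypothetical toy data: 5 positive and 5 negative examples.
labels = ["yes"] * 5 + ["no"] * 5
feature_a = ["a", "a", "a", "a", "b", "a", "b", "b", "b", "b"]

print(entropy(labels))                      # evenly split set
print(entropy(["yes", "yes", "yes"]))       # pure set
gain = information_gain(feature_a, labels)
print(round(gain, 3))
```

On this toy data the evenly split set has an entropy of 1 bit, the pure set has an entropy of 0, and the split on `feature_a` yields a gain of roughly 0.278 bits. The helper works for more than two classes as well, although the definition in the text covers only the binary case.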

A decision tree is a popular and versatile supervised machine-learning algorithm used for both classification and regression problems. It is a flowchart-like tree structure in which each internal node denotes a feature, the branches denote the decision rules, and the leaf nodes denote the result of the algorithm. It works by recursively partitioning the data into subsets based on the values of the input features until a stopping criterion is met.
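The recursive partitioning described above can be sketched as follows. This is a simplified illustration under stated assumptions, not a production algorithm: it handles only categorical features, the stopping criterion (a pure node or no features left) is one choice among many, and the names `best_feature`, `build_tree`, `predict`, and the toy weather data are hypothetical. Because the entropy of the parent node is the same for every candidate feature, picking the lowest weighted entropy after the split is equivalent to picking the highest information gain.

```python
import math
from collections import Counter


def entropy(labels):
    """Entropy, in bits, of a non-empty list of class labels."""
    total = len(labels)
    return sum(-(c / total) * math.log2(c / total)
               for c in Counter(labels).values())


def best_feature(rows, labels, features):
    """Feature whose split leaves the lowest weighted entropy."""
    def split_entropy(feature):
        groups = {}
        for row, label in zip(rows, labels):
            groups.setdefault(row[feature], []).append(label)
        return sum(len(g) / len(labels) * entropy(g) for g in groups.values())
    return min(features, key=split_entropy)


def build_tree(rows, labels, features):
    """Return a leaf (a class label) or a (feature, branches) decision node."""
    # Stopping criterion: the node is pure, or no features remain.
    if len(set(labels)) == 1 or not features:
        return Counter(labels).most_common(1)[0][0]
    feature = best_feature(rows, labels, features)
    remaining = [f for f in features if f != feature]
    partitions = {}
    for row, label in zip(rows, labels):
        sub_rows, sub_labels = partitions.setdefault(row[feature], ([], []))
        sub_rows.append(row)
        sub_labels.append(label)
    branches = {value: build_tree(r, l, remaining)
                for value, (r, l) in partitions.items()}
    return (feature, branches)


def predict(tree, row):
    """Follow branches until a leaf is reached (labels must not be tuples)."""
    while isinstance(tree, tuple):
        feature, branches = tree
        tree = branches[row[feature]]  # raises KeyError for unseen values
    return tree


# Hypothetical toy data, made up for this example.
rows = [
    {"outlook": "sunny", "windy": "no"},
    {"outlook": "sunny", "windy": "yes"},
    {"outlook": "rainy", "windy": "no"},
    {"outlook": "rainy", "windy": "yes"},
]
labels = ["play", "play", "stay", "stay"]

tree = build_tree(rows, labels, ["outlook", "windy"])
print(tree)
print(predict(tree, {"outlook": "rainy", "windy": "no"}))
```

On this toy data the tree splits on `outlook` alone, because that split already produces pure subsets and the recursion stops at the leaves.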
